Randomized clinical trials (RCTs) are the gold standard for establishing that health interventions are both safe and effective. However, uninformative clinical studies are a recognized problem across global health conditions and environments. We consider research uninformative when a study ends without a conclusive finding, whether that finding would have been a meaningful health effect of the intervention or clear evidence that the intervention had no effect at all. The consequences of uninformative research include reduced impact, wasted money and time, and participants who undergo study treatments without their commitment and investment yielding any benefit.

Highly Efficient Clinical Trials (HECT), and its successor DAC, present an opportunity to improve RCTs in global health by introducing new standards for engagement and implementing a mix of established and emerging best practices:

- Simulate sample sizes to ensure trials last long enough, but not too long

- Use an analysis plan that is fixed up-front, focusing on commonly used endpoints

- Communicate with participants, families, and communities before, during, and after a study

Incorporating these approaches makes researchers more likely to reach the study goals and decreases the chance of additional studies being needed to address the same question.
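As a rough illustration of the first practice, the sketch below estimates power by simulation for a simple two-arm trial with a binary outcome, then picks the smallest candidate sample size that reaches a target power. It is not the HECT software. The function names, the event rates, the 5% two-sided z-test, and the grid of candidate sizes are all assumptions chosen for illustration.

```python
import math
import random


def simulate_trial(n_per_arm, p_control, p_treatment, rng):
    """Simulate one two-arm trial; return True if a two-sided z-test rejects at 5%."""
    events_c = sum(rng.random() < p_control for _ in range(n_per_arm))
    events_t = sum(rng.random() < p_treatment for _ in range(n_per_arm))
    pooled = (events_c + events_t) / (2 * n_per_arm)
    se = math.sqrt(2 * pooled * (1 - pooled) / n_per_arm)
    if se == 0:
        return False  # no variation observed, so no evidence of a difference
    z = (events_t - events_c) / n_per_arm / se
    return abs(z) > 1.96


def estimated_power(n_per_arm, p_control, p_treatment, n_sims=2000, seed=0):
    """Fraction of simulated trials that reject the null hypothesis."""
    rng = random.Random(seed)
    hits = sum(
        simulate_trial(n_per_arm, p_control, p_treatment, rng)
        for _ in range(n_sims)
    )
    return hits / n_sims


def smallest_sample_size(p_control, p_treatment, target_power=0.8,
                         candidates=range(50, 1001, 50)):
    """Return the first candidate size per arm that meets the target power, else None."""
    for n in candidates:
        if estimated_power(n, p_control, p_treatment) >= target_power:
            return n
    return None


if __name__ == "__main__":
    # Hypothetical scenario: 30% event rate in control, 20% with the intervention.
    print(smallest_sample_size(0.30, 0.20))
```

A real trial would replace the assumed event rates with estimates from prior data and would typically explore many scenarios, not a single one.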

Download HECT (now DAC) materials:

a. Introducing Highly Efficient Clinical Trials: a presentation (core concepts generated by Ki partner MTEK Sciences, now Cytel Canada)

b. Primer for principal investigators: a white paper

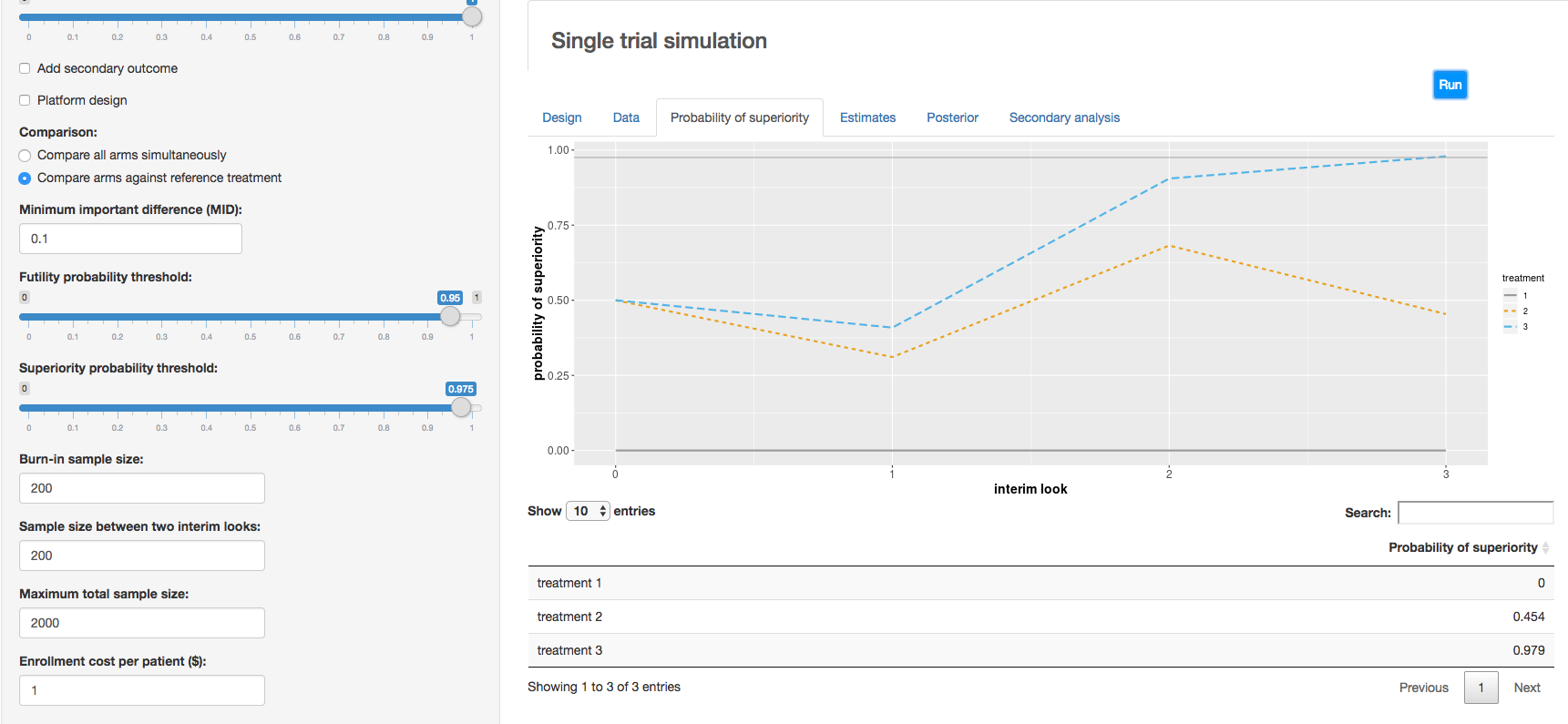

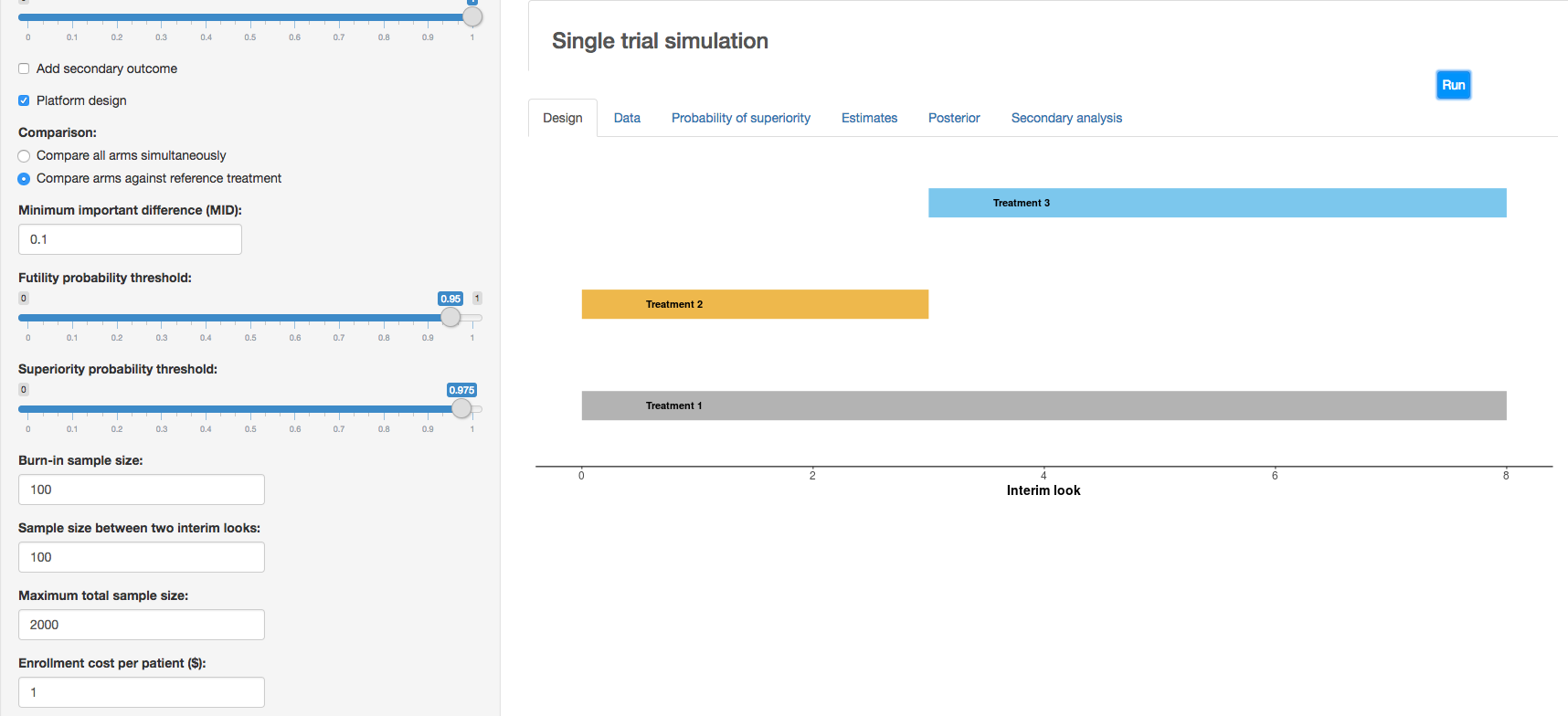

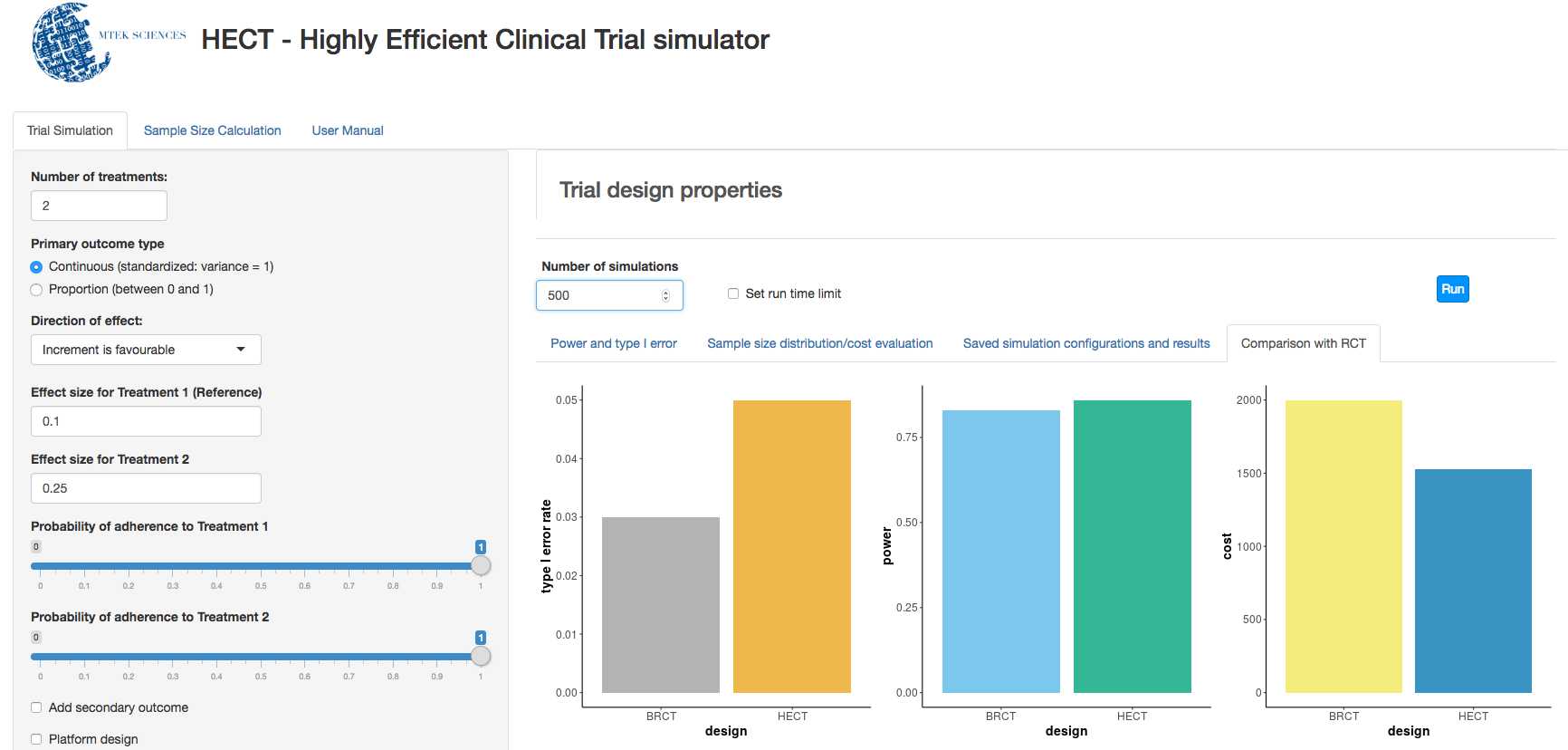

c. Clinical trial simulation: HECT open-source software. This application lets users run adaptive clinical trial simulations to evaluate the pros and cons of a variety of candidate adaptive trial designs. See below for additional explanation of HECT software features.

More about DAC:

Gates Foundation program officers now have the flexibility to offer grantees trial planning grants to institute DAC best practices in their studies. Building upon the HECT principles described above, DAC includes the following concepts:

Efficient clinical research:

a) Uses simulation to identify the best sample size, so that the trial ends with insights no sooner and no later than is scientifically sound.

b) Answers multiple questions during a single study, sometimes with multiple treatment arms in an adaptive design, and includes interim evaluations.

c) Has generalizable and actionable results, often by identifying target policies beforehand, measuring commonly used endpoints, and performing the study in multiple sites.

d) Engages the community, local health system, and study participants before, during, and after the study.
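To make the idea of interim evaluations in item b) concrete, here is a minimal sketch of a two-arm trial with a single interim look at the halfway point, simulated many times to estimate how often it stops early and how many participants it uses on average. It is not the HECT software or a DAC-endorsed design. The function names, event rates, and stopping thresholds (illustrative O'Brien-Fleming-style values for two looks) are assumptions; a real design would derive its boundaries with dedicated group-sequential methods.

```python
import math
import random

INTERIM_Z = 2.797  # assumed efficacy boundary at the halfway look (illustrative)
FINAL_Z = 1.977    # assumed boundary at the final analysis (illustrative)


def z_statistic(events_c, events_t, n_per_arm):
    """Pooled two-proportion z statistic for equal-sized arms."""
    pooled = (events_c + events_t) / (2 * n_per_arm)
    se = math.sqrt(2 * pooled * (1 - pooled) / n_per_arm)
    if se == 0:
        return 0.0
    return (events_t - events_c) / n_per_arm / se


def run_trial(max_n_per_arm, p_control, p_treatment, rng):
    """Return (rejected_null, n_per_arm_used) for one simulated trial."""
    half = max_n_per_arm // 2
    events_c = sum(rng.random() < p_control for _ in range(half))
    events_t = sum(rng.random() < p_treatment for _ in range(half))
    # Interim look: stop early only if the evidence is already very strong.
    if abs(z_statistic(events_c, events_t, half)) > INTERIM_Z:
        return True, half
    remaining = max_n_per_arm - half
    events_c += sum(rng.random() < p_control for _ in range(remaining))
    events_t += sum(rng.random() < p_treatment for _ in range(remaining))
    rejected = abs(z_statistic(events_c, events_t, max_n_per_arm)) > FINAL_Z
    return rejected, max_n_per_arm


def operating_characteristics(max_n_per_arm, p_control, p_treatment,
                              n_sims=2000, seed=0):
    """Estimate rejection rate, mean sample size, and early-stop rate by simulation."""
    rng = random.Random(seed)
    results = [run_trial(max_n_per_arm, p_control, p_treatment, rng)
               for _ in range(n_sims)]
    return {
        "reject_rate": sum(rejected for rejected, _ in results) / n_sims,
        "mean_n_per_arm": sum(n for _, n in results) / n_sims,
        "early_stop_rate": sum(n < max_n_per_arm for _, n in results) / n_sims,
    }


if __name__ == "__main__":
    # Hypothetical scenario: 30% event rate in control, 20% with the intervention.
    print(operating_characteristics(400, 0.30, 0.20))
```

Running the same function with equal event rates in both arms gives a rough check that the assumed thresholds keep the false-positive rate near the intended level.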

We have identified a library of best practices that we believe lead to trials that reach completion and yield actionable information. Our best practices are informed by years of trial experience, multiple exemplars across a variety of geographies and pathologies, examples of trials that proved uninformative when best practices were absent, and solid science.

START DATE

September 2017

STAGE OF DEVELOPMENT

–

WORKING TEAMS

MTEK Sciences (now Cytel Canada)